AI Usage Monitoring in the Workplace: How Organizations Can Manage Generative AI Risk

by

Bec May

In 2025, nearly 100,000 shared ChatGPT conversations, including business discussions, internal communications, and personal queries, were discovered indexed in Google search results after users unknowingly enabled a public sharing feature. The incident highlighted an emerging challenge for organizations adopting generative AI. As employees increasingly experiment with AI tools in everyday work, sensitive information can easily leave corporate systems without teams realizing it.

Artificial intelligence is no longer breaking news. Across technology, design, manufacturing, government, finance, marketing, sales, and HR, employees are using AI systems to support daily tasks. Staff rely on artificial intelligence to summarise reports, draft emails, analyse spreadsheets, write code, and solve operational problems faster than ever before.

The issue is not simply whether artificial intelligence is being used. In many organizations, AI adoption is already well underway. The real challenge is visibility. Businesses need to understand which AI tools employees are accessing, what information may be entering those systems, and how AI platforms interact with corporate data.

The productivity gains are real. Research cited by Forbes suggests generative AI can improve productivity by 14–55 percent at the task level, helping teams automate repetitive work and analyse information more quickly. Tasks such as document analysis, problem solving, data summarization, and code debugging that once took hours or days can now be completed in minutes.

But the rapid adoption of AI has also created a new visibility challenge for many organizations. Many businesses now need ways to monitor AI usage across their networks to understand which AI tools employees are accessing and how those systems interact with corporate data.

Most businesses have limited insight into which AI tools employees use, how often they use them, and what information they enter into those systems. Staff may paste confidential information into prompts, upload internal documents for analysis, or connect internal systems to external AI tools without fully understanding the potential security and compliance implications.

Without monitoring AI usage, organizations develop a growing blind spot around one of the fastest-moving technologies in the workplace.

Understanding how AI tools are accessed across corporate networks is becoming essential for maintaining data security, protecting intellectual property, and ensuring compliance with privacy and regulatory requirements.

Many organizations are surprised to discover how widely AI tools are already being used across their networks. With the right firewall reporting and monitoring in place, it is possible to quickly identify which AI platforms are being accessed and how they are being used.

What is AI Usage Monitoring in the Workplace?

AI usage monitoring refers to the process of tracking how employees access and interact with artificial intelligence tools across organizational systems and networks.

This may include identifying which AI platforms are accessed via firewall reporting software, analyzing usage patterns, logging interactions, and detecting potential security or compliance risks associated with generative AI tools.

Organizations are increasingly turning to AI monitoring to maintain visibility into AI adoption, protect sensitive data, and ensure responsible use of artificial intelligence systems in the workplace.

Key Risks of Unmonitored AI Usage

Organizations that do not monitor AI usage may face several emerging risks.

Employees entering confidential information into public AI tools

Shadow AI tools used without IT approval

Intellectual property exposure through prompts or document uploads

Compliance violations involving regulated data

Automated AI agents interacting with internal systems without oversight

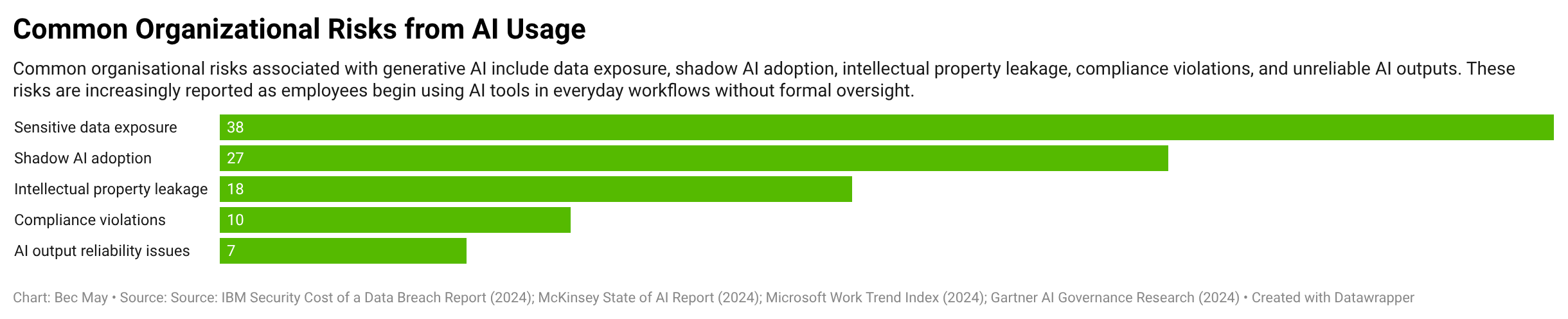

This chart highlights the most common organisational risks associated with generative AI adoption, including data exposure, shadow AI usage, intellectual property leakage, and compliance violations as employees increasingly experiment with AI tools in everyday work.

Source: IBM Security Cost of a Data Breach Report (2024); McKinsey State of AI Report (2024); Microsoft Work Trend Index (2024).

These fall into two main categories. Output risks and input risks, both of which entail compliance, reputational, and security considerations.

AI Input risks: Business data entering AI Systems

The most immediate risk associated with generative AI tools is the information employees provide to them.

Most AI platforms operate as external cloud services. When staff paste content into prompts, upload documents, or connect internal systems to AI tools, sensitive information may leave the organization’s controlled environment.

Unlike traditional enterprise software, many generative AI applications are publicly accessible web services. Employees can access and begin using them almost instantly, often without approval from IT, security, or leadership teams. This means organizations are often left with limited visibility into what sensitive information is being shared with those systems.

Several widely reported incidents have demonstrated how easily sensitive information can be exposed when employees use AI tools in everyday work without proper guardrails.

Confidential Data Exposure

One of the most common and easily overlooked risks occurs when employees paste internal information into AI prompts. Generative AI tools are fast, conversational, easy to access, and feel very similar to internal tools like Slack or messaging apps. A question goes into the prompt box, an answer appears seconds later, and the workflow continues. In this process, staff may paste internal information into AI prompts without considering where that data is going or how it may be stored, processed, or reused by the AI provider. When was the last time someone in your team pasted live customer data into ChatGPT or a similar tool? Asking this question internally can help organizations reflect on just how easily and frequently sensitive information might find its way into AI systems.

Examples include:

Financial forecasts of internal reports are being pasted into a chatbot for summarization

Customer records or personally identifiable information (PII) included in prompts

Source code or product documentation shared for troubleshooting

Internal policies or strategy documents uploaded for analysis

In 2025, thousands of ChatGPT conversations appeared in Google search results after users unintentionally enabled the platform's public link feature. Some of those conversations included sensitive personal information, internal business ideas, and private queries that users never intended or expected to be publicly searchable.

The exposure occurred because shared chat links could be indexed by search engines when discoverability was enabled. Once indexed, the prompts and responses within those conversations could appear in Google search results.

OpenAI later removed the feature following privacy concerns and worked with search engines to remove indexed conversations. However, the less for organizations remains; once information is entered into an external AI platform, it may be stored, shared, or exposed in ways that are difficult to predict.

Monitoring AI systems usage at the network level helps organizations detect when internal data is being submitted to AI platforms, identify the users involved, and establish clearer governance around how generative AI tools are used in the workplace.

Intellectual property loss

Generative AI tools are increasingly used by developers, engineers, and analysts to debug code, review technical material, analyze datasets, and troubleshoot problems.

However, entering proprietary information into external AI platforms can create significant data security risks.

Examples may include:

Proprietary source code

Engineering documentation

Internal research data

Product designs and diagrams

Confidential strategy documents and trade secrets

Once submitted to public AI tools, this information leaves the organisation’s controlled environment. Depending on the platform’s policies, prompts may be stored, reviewed, or used as training data for AI models, thereby reducing organizations' visibility into how proprietary information may be retained or processed.

This risk has already occurred in real organizations. In 2023, Samsung engineers unintentionally exposed confidential source code and internal meeting notes after entering the information into ChatGPT while troubleshooting software issues. The incident raised concerns about the loss of intellectual property and data leakage, prompting the company to restrict the use of generative AI internally.

As AI assistants become embedded into development environments and other AI applications, monitoring AI tool usage is becoming an important part of protecting intellectual property and maintaining data security.

Regulatory Compliance Violations/Breaches

Aside from the data loss risks mentioned above, entering regulated data into AI models can also create serious compliance risks.

Many organizations operate under strict privacy laws governing the handling of sensitive information. Regulations such as the General Data Protection Regulation (GDPR) in Europe and the California Consumer Privacy Act (CCPA) require organizations to maintain control over how customers' personal data is collected, processed, and transferred.

If employees paste regulated data into public AI tools while analyzing customer records, legal documents, or internal reports, organizations may inadvertently breach these compliance requirements.

Regulators have already begun examining how AI platforms handle personal information. In 2023, the Italian data protection authority temporarily banned ChatGPT while investigating whether the platform complied with GDPR requirements around data collection transparency and lawful processing.

The investigation highlighted growing regulatory scrutiny of artificial intelligence systems and how they process sensitive information.

AI output risks: Content and behaviour generated by AI

Generative AI systems also introduce risks related to the content they produce or the actions they trigger.

Because Large Language Models (LLMs) generate responses probabilistically rather than retrieving verified facts, their outputs are not reliable.

AI hallucinations and inaccurate information

AI systems can, and do, generate responses that appear credible, but contain incorrect or fabricated information presented as fact. These outputs are commonly referred to as AI hallucinations (also called bullshitting, confabulation, or delusion).

As a result, AI systems may generate non-existent citations, fabricate legal cases, misinterpret data, or present speculative information with high confidence. Without human verification, these responses can introduce significant operational, legal, or reputational risks.

One of the most widely reported examples of these hallucinations causing serious credibility issues occurred during the Mata v. Avianca case in the United States. A lawyer used ChatGPT to assist with legal research and submitted a court filing that cited multiple legal cases generated by the AI system. When the court attempted to verify the references, it discovered that several of the cited cases were entirely fabricated.

The judge later sanctioned the legal team, highlighting the risks of relying on AI-generated information without verification. Experts went on to caution that lawyers cannot reasonably rely on the accuracy, completeness, or validity of content generated by AI tools.

As generative AI tools become more integrated into everyday workflows, these risks extend beyond the legal sector. AI-generated inaccuracies can affect financial analysis, technical documentation, software development, and strategic decision-making if outputs are used without verification.

Prompt Injection and AI system manipulation

Generative AI systems can also introduce new cybersecurity risks when they are connected to internal documents, knowledge bases, or business systems.

One emerging attack technique is prompt injection, in which malicious instructions are embedded in text, documents, or websites to manipulate an AI system's behaviour.

This becomes particularly relevant when employees use AI assistants to analyse

In a 2023 paper titled Prompt Injection Attacks Against LLM-Integrated Applications," security researchers showed how malicious prompts embedded in webpages could instruct AI systems to retrieve information from connected services and include it in their responses.

In one widely reported example, a Stanford student showed that Microsoft’s Bing Chat could be tricked into revealing its hidden system instructions by asking the chatbot to ignore its previous instructions. The system disclosed parts of its internal prompt configuration that were meant to remain private.

When AI assistants are connected to internal tools such as document repositories, email systems

As AI tools become integrated with corporate documents, databases, and APIs, these vulnerabilities highlight how quickly sensitive information could be exposed if AI activity is not properly monitored.

Benefits of Monitoring AI Usage in the Workplace

Monitoring AI usage allows organizations to gain the benefits of AI adoption while still maintaining visibility and control over how corporate data interacts with these systems. Key benefits include:

Data Security Monitoring AI usage helps organizations detect when sensitive data may be entering external AI platforms. By tracking which AI tools employees access and analysing usage patterns, security teams can identify potential data leakage early and maintain stronger control over confidential information and intellectual property.

Regulatory Compliance Many organizations must demonstrate how regulated data is processed and shared. Monitoring AI systems creates audit trails and detailed logs of AI interactions, allowing organizations to investigate incidents, respond to regulatory inquiries, and demonstrate compliance with privacy laws and industry regulations.

Visibility into AI Adoption AI tools often spread across departments before governance policies are established. Monitoring tools provide a unified view of which AI platforms employees access, how often they use them, and how usage trends change over time. This visibility helps organizations understand where AI is being adopted and where training and education may be required.

Detection of Technical Risks Continuous monitoring of AI systems can also reveal technical risks within AI models. Tracking AI interactions and system behaviour allows organizations to detect issues such as model drift, unreliable outputs, or unusual activity that may indicate misuse or manipulation of AI systems.

While monitoring AI usage offers clear benefits, organizations must also address several operational and governance challenges when managing artificial intelligence in the workplace.

How Businesses Monitor AI Usage

As organizations begin addressing the risks associated with generative AI, many are looking more closely at how AI tools appear in their network activity. As incidents involving sensitive data exposure through AI tools began appearing in the news, many organizations started reviewing firewall logs to understand whether employees were accessing AI platforms and whether corporate data might be leaving the network.

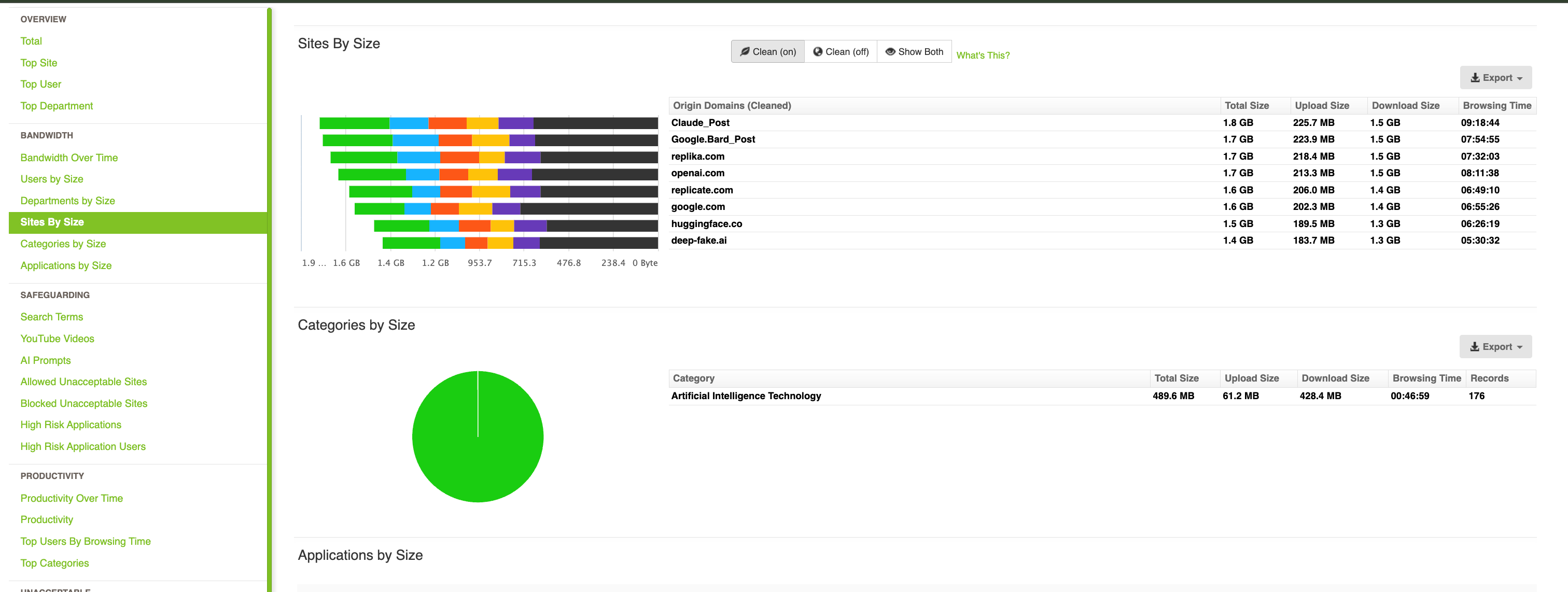

Monitoring AI usage in the workplace does not require direct access to the AI platforms themselves. In most organizations, the fastest way to understand AI activity is through network visibility.

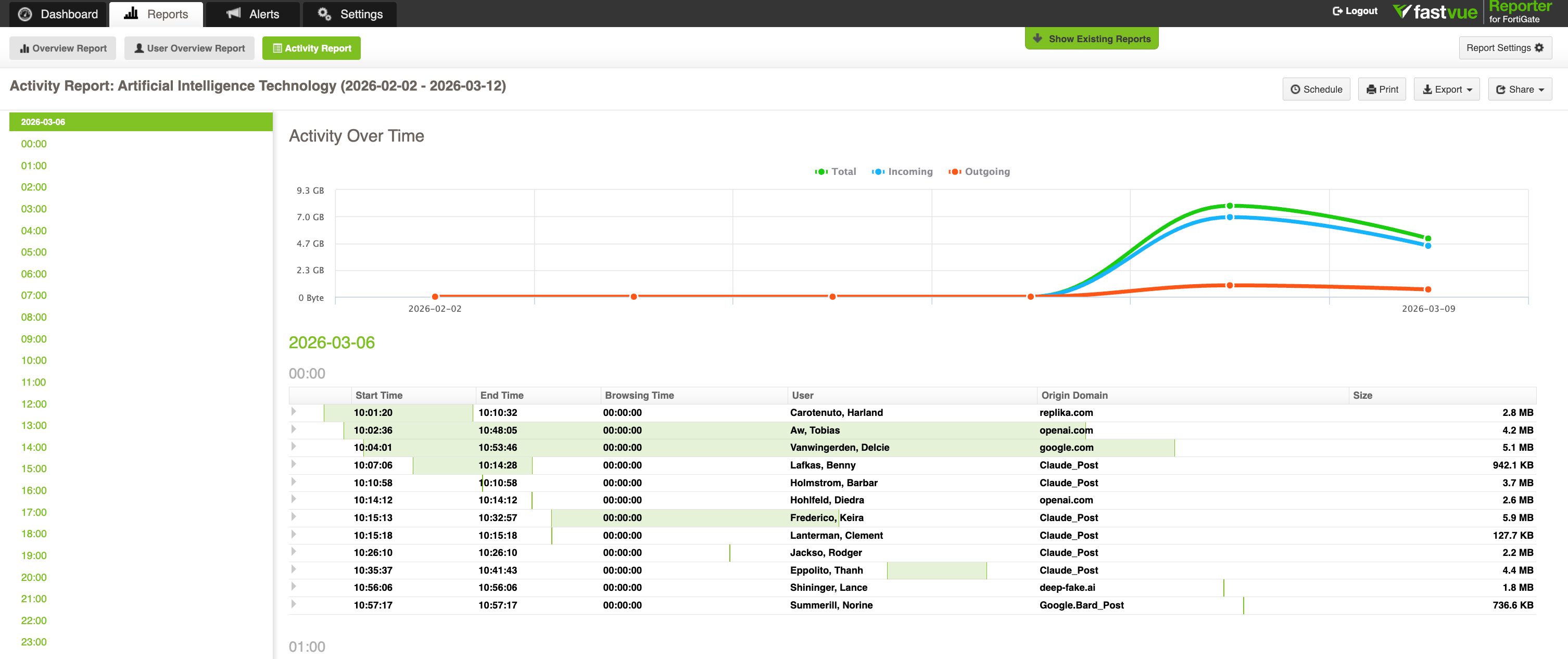

When employees access AI tools such as ChatGPT, Microsoft Copilot, or Google Gemini, those interactions generate network traffic. Firewalls and web gateways record this activity in log files that can be analysed to understand how AI systems are being used across the organisation. In most environments, these records are exported as firewall syslogs. When structured into dashboards and reports using tools such as Fastvue Reporter, these logs allow security teams to investigate AI activity across the network and identify potential data exposure.

When structured into reporting dashboards and alerts, these logs allow security teams to investigate AI activity in much greater detail.

1. AI platform detection

Identify which AI platforms are being accessed across the network. This allows IT teams to see whether employees are using approved tools or introducing shadow AI services without oversight

2. User-level activity visibility

User-level activity visibility allows security teams to see which users or departments are interacting with AI tools and how frequently those platforms are accessed. This helps organizations identify adoption trends, detect unusual activity, and investigate potential data exposure involving generative AI systems.

3. AI prompt and interaction logging

Some firewalls now log AI prompts or request metadata. Structured reporting can help security teams identify situations where sensitive information may have been entered into AI tools. For example, platforms such as FortiGate can capture AI prompt activity, which can then be analysed using tools designed for monitoring AI prompts and interactions across firewall logs. Security teams can use this visibility to investigate AI activity at the user level and understand how generative AI tools interact with corporate data.

4. Real-time alerts for potential risks

Monitoring systems can be configured to trigger real-time alerts when AI activity occurs, such as large uploads to AI platforms or sudden spikes in usage of AI tools.

5. Investigation-ready audit trails

Detailed logs allow security teams to reconstruct events in the event of a data exposure or compliance incident. This is particularly important when investigating cases involving confidential information, intellectual property, or regulated data.

Understanding how AI usage appears in network activity is the first step. The next challenge for organizations is managing how these tools are governed and used across the workplace.

Challenges of Managing AI in the Workplace

While artificial intelligence offers significant opportunities for businesses, managing AI use across an organisation introduces several operational and governance challenges.

1. Rapid and Uncontrolled AI Adoption

AI tools are often adopted faster than organizations can establish governance frameworks. Because many AI platforms are easily accessible through web applications or built into existing software, employees may begin experimenting with AI tools long before policies or oversight mechanisms are in place.

This creates a visibility gap for IT and security teams attempting to understand how artificial intelligence is being used across the organisation.

2. Establishing Clear Governance

Many organizations are still developing governance frameworks for artificial intelligence. Determining which AI tools are approved, how data should be handled, and where human oversight is required can be complex, particularly in large organizations operating across multiple departments.

Without clear governance policies, employees may make inconsistent decisions about when and how to use AI systems.

3. Maintaining Transparency and Trust

Monitoring AI usage can raise concerns among employees if it is not implemented transparently. Organizations should communicate clearly about why AI monitoring exists, what activity is being monitored, and how the information will be used.

Transparent communication helps build trust and ensures monitoring is seen as a governance safeguard rather than workplace surveillance.

4. Maintaining Human Oversight

AI systems can support decision-making, but they should not replace human judgment in critical situations. Organizations must ensure that employees review AI-generated outputs and remain accountable for decisions influenced by AI tools. A helpful framework to consider is the 30% rule.

Maintaining this human-in-the-loop approach is essential for responsible and ethical use of artificial intelligence.

5. Workforce Skills and Adaptation

As AI tools become embedded in everyday workflows, organizations must ensure that employees continue to develop professional expertise rather than relying entirely on automated outputs.

Training programs that focus on responsible AI use, prompt literacy, and critical evaluation of AI-generated content can help organizations maintain strong workforce capabilities while benefiting from AI technologies.

Similar visibility challenges are emerging in educational environments, where schools are beginning to review how generative AI tools appear on school networks and how monitoring AI use supports safeguarding.

A Practical Framework for Managing AI in the Workplace

For many organizations, the challenge is not simply deciding whether to allow AI tools in the workplace. In most environments, employees are already experimenting with artificial intelligence systems as part of their daily work.

Managing AI responsibly requires a structured approach that combines governance policies, oversight, and employee training.

A practical framework for managing AI usage typically includes four core components:

1. Visibility

Organizations must first understand which AI platforms are being accessed across their networks. Monitoring tools and firewall reporting can reveal which AI systems employees interact with, how frequently those tools are used, and whether sensitive data may be leaving the network.

2. Governance

Clear policies define how artificial intelligence tools should be used within the organisation. This includes identifying approved AI platforms, defining acceptable use cases, and establishing rules for handling sensitive or regulated data.

3. Human Oversight

AI systems should support decision-making rather than replace human judgment. Employees should review critical outputs generated by AI tools to ensure accuracy, ethical standards, and accountability.

4. Continuous Monitoring

AI usage and AI system behaviour should be monitored over time. Continuous monitoring helps organizations detect unusual activity, track usage trends, and identify emerging risks as artificial intelligence adoption grows.

Together, these elements help organizations balance the benefits of AI tools with the governance and oversight required to manage risk effectively.

Implementing AI Governance and Usage Policies

Monitoring AI use is a fundamental piece of the responsible AI adoption puzzle; it is just that, one piece. Organizations need clear governance policies that define how artificial intelligence systems should be used within the workplace.

Many enterprises are establishing cross-functional governance structures to oversee AI deployment. These committees often include representatives from IT, security, legal, HR, and ethics teams to review potential risks and ensure that AI systems align with legal and ethical standards.

One of the most important steps is creating an AI Acceptable Use Policy (AUP). This policy should define:

Approved AI platform employees may access

Which public AI tools are prohibited

Rules for handling sensitive, proprietary, and regulated data

Expectations around human review of AI-generated content

Human oversight is probably the most important of these. For decisions that affect customers, employees, or financial outcomes, organizations should require human review before acting on AI-generated outputs.

Effective governance also requires ongoing evaluation of how AI systems are performing in real-world use. Teams should regularly review whether AI tools are producing accurate, reliable, and fair results, particularly in sensitive areas like finance or customer decisions.

Simple practices such as periodic reviews, bias checks, and clear human oversight help ensure that AI systems continue to behave as expected and align with organisational policies.

AI Monitoring Checklist for Businesses

As artificial intelligence becomes embedded in everyday workflows, organizations need practical steps to maintain visibility and control over how AI tools interact with corporate data.

The following checklist provides a simple framework for monitoring AI usage in the workplace.

1. Identify AI tools appearing on your network.

Start by understanding which AI platforms employees are accessing across your network. Firewall reporting software and network monitoring tools can reveal whether platforms such as ChatGPT, Microsoft Copilot, Google Gemini, Claude, or Perplexity are appearing in network activity.

This visibility helps IT and security teams identify which AI services are already in use, detect shadow AI tools, and understand how generative AI is spreading across different departments.

Once organizations can identify which AI tools appear in network logs, they can begin establishing governance policies and usage guidelines.

2. Create an AI Acceptable Use Policy

Define which AI tools are approved for business use and which should be restricted. Policies should also clarify how employees should handle confidential information when interacting with AI systems.

3. Communicate the need to protect sensitive and regulated data

Employees should understand that financial data, customer records, proprietary code, and internal documents should not be entered into public AI tools without appropriate safeguards in place.

4. Maintain human oversight of AI outputs.

AI systems should assist decision-making rather than replace it. Outputs that influence product design, customer interactions, or legal or financial decisions should always be reviewed by employees.

5. Track AI usage patterns

Using monitoring tools can reveal how frequently AI platforms are accessed, which departments use them, and whether usage patterns change over time.

6. Maintain audit trails

Detailed logs of AI interactions enable organizations to investigate potential data-exposure incidents and demonstrate compliance with regulatory requirements.

7. Review AI usage regularly

AI adoption evolves quickly. Periodically reviewing usage trends and governance policies helps organizations maintain visibility as new tools and AI capabilities emerge.

The Rise of AI Agents and Autonomous Workflows

Another development increasing the need for visibility is the rise of AI agents.

Unlike traditional prompts, AI agents combine large language models with software applications that can act independently to complete tasks. These systems may research information, respond to customer inquiries, schedule meetings, analyse documents, or interact with other systems on behalf of employees.

According to Deloitte’s Global 2025 Predictions Report, 25 percent of enterprises using generative AI plan to deploy AI agents by 2025, growing to 50 percent by 2027.

As organizations integrate these agents into everyday workflows, the volume of data exchanged between AI platforms and corporate systems will increase significantly.

Recently reported security incidents are bringing the severity of these risks to light. Researchers discovered a vulnerability in the open-source AI agent OpenClaw. To initiate an attack, an attacker only needed to trick the victim into visiting a malicious website once. The flaw then allowed the attacker to take control of the agent and execute actions on the user’s system, demonstrating how autonomous AI tools interacting with external websites can create entirely new attack surfaces.

Because AI agents can access files, execute commands, and interact with other systems on behalf of users, visibility is even more important for security teams. Monitoring how AI tools and AI-driven systems interact with corporate networks helps organizations detect unusual behaviour early and maintain stronger control over how sensitive information is handled.

Compliance Risks of Unmonitored AI Use

For many organizations, the risks associated with generative AI extend beyond data security. They also create regulatory compliance obligations.

Many industries operate under strict laws governing how personal, financial, and health information is collected, processed, and shared. When employees enter sensitive data into external AI platforms, organizations may unintentionally violate these compliance requirements.

Unlike traditional enterprise software, most generative AI platforms operate as external cloud services. When employees paste information into AI prompts, that data may be transmitted to third-party systems outside the organisation’s control. Without monitoring AI usage, businesses may have little visibility into how often regulated data is being entered into AI systems.

Several major regulatory frameworks already apply to these situations.

GDPR and Data Protection Requirements

The General Data Protection Regulation (GDPR) in the European Union requires organizations to maintain strict control over how personal data is processed and transferred to third parties.

If employees enter personal data, such as customer records, employee information, or contact details, into external AI tools, this may constitute an unauthorised data transfer under the GDPR.

Organizations found to be in violation can face steep penalties.

GDPR allows regulators to issue fines of up to £20 million or 4 percent of global annual turnover, whichever is higher.

HIPAA and Healthcare Data

Businesses operating in the US healthcare environment are subject to obligations under the Health Insurance Portability and Accountability Act (HIPAA).

If protected health information (PHI) is entered into public AI platforms without appropriate safeguards or business associate agreements, organizations may violate HIPAA security and privacy rules.

HIPAA violations can result in penalties ranging from $100 to $50,000 per violation, with an annual maximum of $1.5 million or more, depending on the severity of the breach.

Financial and Industry Regulations

Financial institutions, government agencies, and critical infrastructure providers may also be subject to additional compliance frameworks, including:

• PCI DSS for payment card data

• SOC 2 requirements for service providers

• SEC cybersecurity disclosure rules

• National privacy laws such as the California Consumer Privacy Act (CCPA)

These regulations often require organizations to maintain audit trails, access controls, and monitoring systems that demonstrate how sensitive data is handled.

If employees enter regulated data into AI platforms without oversight, organizations may struggle to demonstrate compliance during regulatory investigations or security audits.

What are the most common AI Tools Employees Access on Corporate Networks?

Generative AI platforms are now widely accessible via web applications and cloud services, allowing employees to use them instantly without IT involvement.

This rapid accessibility has led to widespread workplace adoption. Azumo Research shows that 58% of employees now report regularly using AI tools at work, with roughly one-third using them weekly or daily.

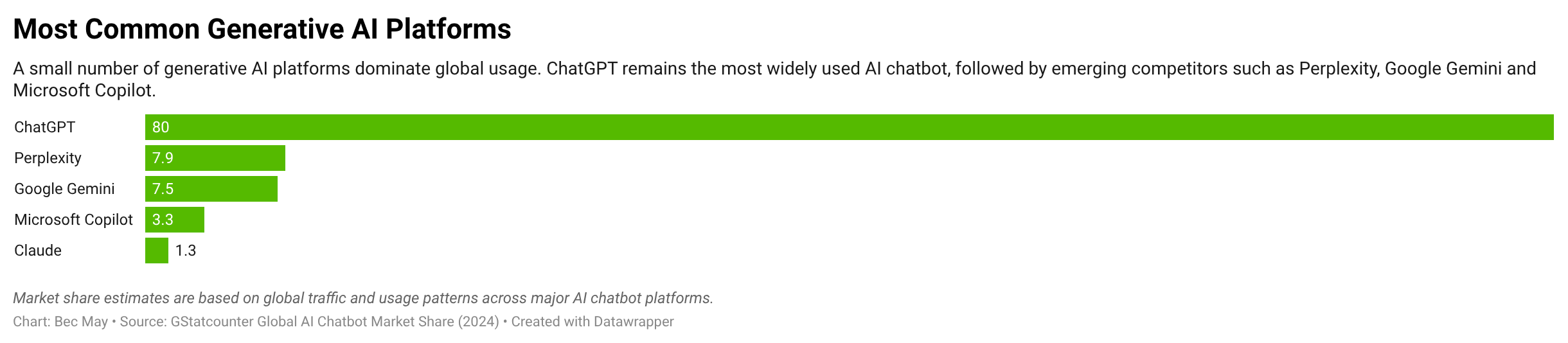

At the same time, the generative AI ecosystem has quickly consolidated around a small number of dominant platforms.

Common AI platforms appearing on corporate networks include:

ChatGPT

Microsoft Copilot

Google Gemini

Claude

Perplexity

The chart above illustrates how concentrated generative AI usage has become. A small number of platforms account for the majority of global activity, with ChatGPT dominating usage and newer tools such as Perplexity, Google Gemini and Microsoft Copilot rapidly gaining adoption.

Source: StatCounter Global AI Chatbot Market Share (2024); SimilarWeb Generative AI Traffic Trends (2024–2025).

Gaining Visibility into AI Usage

Generative AI is already embedded in the modern workplace. Employees are experimenting with AI tools, integrating them into workflows and increasingly relying on them to solve everyday problems. In many organizations, this adoption is outpacing governance frameworks or security controls.

The challenge for IT and security teams is not simply deciding whether to use AI. In most environments, the question has already been answered.

The real challenge is visibility.

Understanding which AI tools employees access, how frequently they use them, and whether sensitive information is shared with those systems is becoming a critical part of modern security and compliance practices. As AI agents, integrations, and automated workflows become more common, visibility becomes even more important.

Tools like Fastvue Reporter help organizations transform firewall logs into structured reports, dashboards, and alerts, allowing teams to quickly understand how AI platforms are being used across the network and investigate potential risks.

If you want to understand how AI tools are appearing in your environment, start by reviewing your firewall reporting and network visibility. Identifying AI usage patterns early helps organizations reduce blind spots, strengthen governance, and safely adopt the benefits of artificial intelligence.

Contact us today to explore how Fastvue Reporter can help you gain visibility into AI activity across your network.

FAQs

Don't take our word for it. Try for yourself.

Download Fastvue Reporter and try it free for 14 days, or schedule a demo and we'll show you how it works.

- Share this storyfacebooktwitterlinkedIn